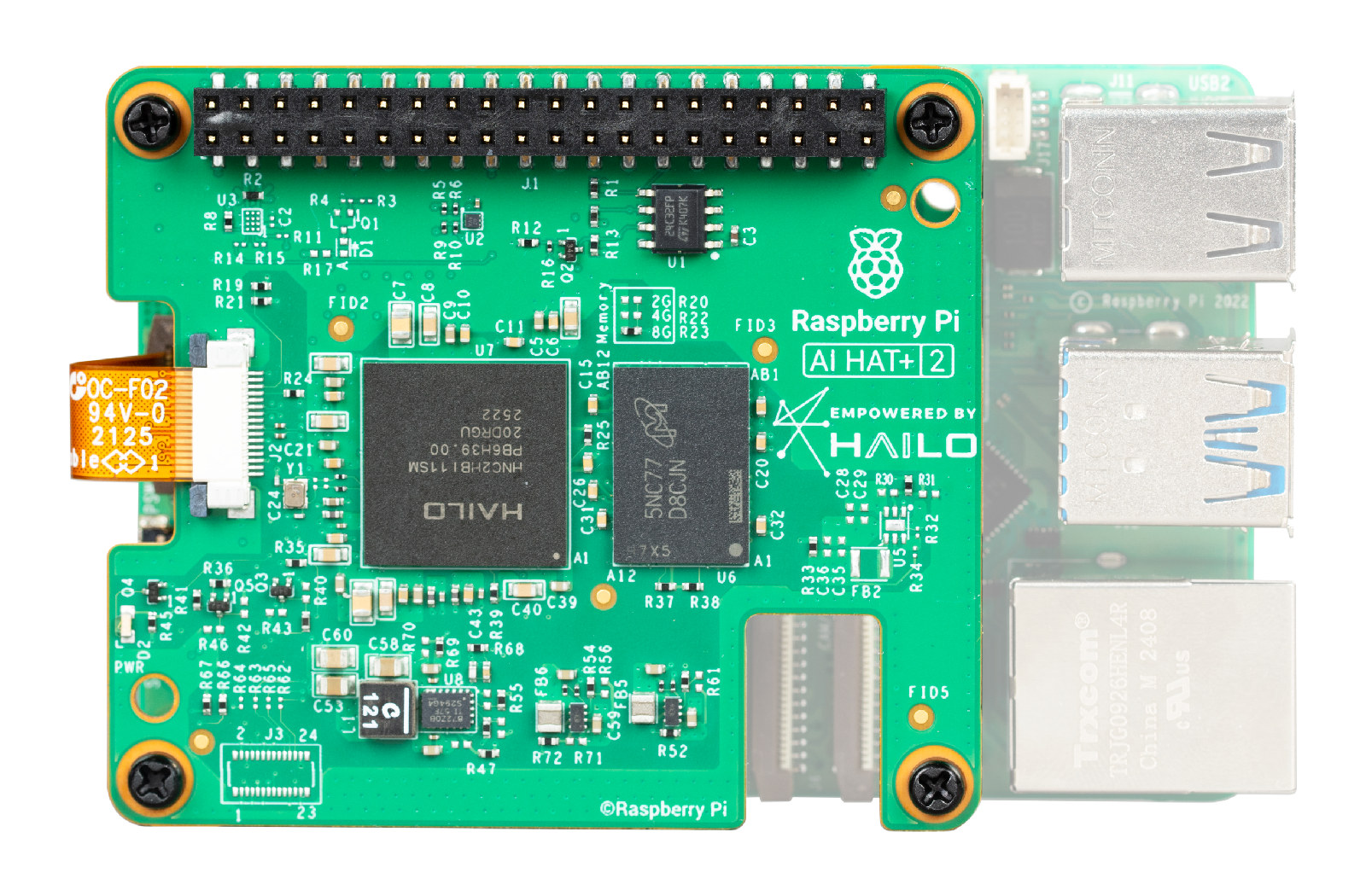

Raspberry Pi AI HAT+ 2: Run LLMs Locally for Just $130

The Raspberry Pi AI HAT+ 2 brings local LLMs to the edge with the Hailo 10H chip, 8GB dedicated RAM, and support for DeepSeek, Llama, and Qwen—all running offline at just 3 watts.

The Rise of Sovereign Edge AI

For years, the promise of Generative AI has been tethered to the cloud. This reliance often comes with significant hurdles: high latency, recurring subscription costs, and complex data privacy concerns. However, the landscape of edge computing just underwent a seismic shift. The release of the Raspberry Pi AI HAT+ 2 marks a turning point, offering a high-performance, low-power solution for running Large Language Models (LLMs) locally for a one-time hardware investment of just $130.

The ability to deploy models like DeepSeek, Llama, and Qwen on a device that fits in the palm of your hand—and consumes only 3 watts of power—opens unprecedented doors for industrial IoT, secure voice interfaces, and localized enterprise automation across any industry.

Technical Breakthrough: The Hailo 10H and 8GB Dedicated RAM

The core of the Raspberry Pi AI HAT+ 2 is the Hailo 10H AI Acceleration Module. While previous AI accelerators for the Pi focused primarily on computer vision and object detection, the AI HAT+ 2 is explicitly engineered for the generative era. It delivers up to 40 TOPS (Tera Operations Per Second) of performance, but the hardware's true innovation lies in its memory architecture.

Dedicated Memory for Large Models

Unlike standard NPU add-ons that share the host system's RAM, the AI HAT+ 2 features 8GB of dedicated LPDDR4x RAM. This is critical for LLM deployment. The bottleneck is rarely just compute—it's memory bandwidth and capacity. By providing 8GB of dedicated space, the HAT allows the Raspberry Pi 5 to load model weights entirely onto the accelerator, freeing up the Pi's resources for application logic.

- Chipset: Hailo 10H AI Processor

- Performance: 40 TOPS (INT4)

- Memory: 8GB Dedicated LPDDR4x RAM

- Power: ~3 Watts under typical workloads

- Interface: PCIe 3.0 via Raspberry Pi 5 FPC connector

- Price: $130

Supported Models: DeepSeek, Llama, and Qwen

The most compelling feature is verified support for modern LLM architectures. Through the Hailo Model Zoo and optimized software stacks, the AI HAT+ 2 runs quantized versions of leading open-source models:

DeepSeek-R1-Distill (1.5B)

DeepSeek has gained massive traction for its reasoning efficiency. The distilled R1 model runs smoothly on the AI HAT+ 2, perfect for coding assistance, chain-of-thought reasoning, and specialized tasks within a closed network.

Meta's Llama 3.2 (1B)

Llama 3.2 is the industry standard for general-purpose local AI. With 40 TOPS of power, the AI HAT+ 2 achieves usable token-per-second rates for real-time chat applications—viable for customer service kiosks or internal knowledge bases.

Alibaba's Qwen 2.5 Family (1.5B)

Qwen 2.5 includes Instruct and Coder variants, offering strong multilingual capabilities and code generation. Running Qwen locally ensures zero-latency responses with no API costs.

Why Run LLMs Locally?

1. Data Privacy and Security

When AI runs locally, data never leaves the device. There's no risk of sensitive data being used to train third-party cloud models. This "air-gapped AI" approach is essential for healthcare, finance, legal, and government applications.

2. Zero Latency

Cloud API calls introduce network latency. Local inference provides instant responses—critical for voice interfaces, real-time automation, and interactive applications.

3. No Recurring Costs

Cloud AI APIs charge per token. For high-volume use cases, costs add up quickly. The AI HAT+ 2 is a one-time $130 investment with unlimited local inference.

4. Offline Operation

Edge devices often operate in environments where internet is unreliable or unavailable. A 3-watt device powered by solar or battery can provide intelligent diagnostics and voice interfaces anywhere.

Practical Use Cases

- Local Voice Assistants: Process speech-to-text and LLM responses without cloud dependency

- Industrial IoT: Predictive maintenance and diagnostics at the edge

- Smart Retail: AI-powered kiosks that work offline

- Privacy-First Smart Offices: Meeting room assistants that don't transmit data externally

- Robotics: Embedded intelligence for autonomous systems

- Education: Affordable AI labs for schools and universities

Implementation Considerations

Running LLMs on edge hardware requires quantization—compressing model weights from 16-bit to 4-bit or 8-bit to fit within the 8GB memory envelope. This slightly reduces model capability, but the trade-off in speed, privacy, and cost is usually preferred for specific tasks.

Cooling is also important. Despite the low 3W power draw, the Raspberry Pi 5 and AI HAT+ 2 should be housed in an active-cooling enclosure for sustained inference sessions.

Getting Started

The AI HAT+ 2 is available now from official Raspberry Pi resellers. You'll need:

- Raspberry Pi 5 (8GB recommended)

- Up-to-date Raspberry Pi OS

- Active cooling solution

Check the official documentation and Hailo's GitHub for model downloads and setup guides.

Conclusion: The Future is Local

The Raspberry Pi AI HAT+ 2 isn't just a hobbyist tool—it's a professional-grade accelerator that democratizes local AI. For $130, you get a device that runs DeepSeek, Llama, and Qwen models completely offline, with no API costs, no latency, and full data privacy.

The era of sovereign edge AI has arrived. The question isn't whether to deploy AI locally—it's how fast you can get started.

Written by

Optijara AI